intro

ioioioio.eu is a development and project site. it contains material about content delivery, streaming cluster technology and cloud behaviour, built with open source tools. the main idea is to bring the content to the GUI as static or passive items and to use dynamic procedures only rarely. it is simply a playground and sandbox for items, issues and stuff that is not related to a special topic but needs a place to rule the world with a show of force. some of the stuff is mickey-mouse-like and was built during the development of something bigger, which should be online or in production somewhere else.

scope

this landing page introduces the main reasons ( aka topics ) for this site.

one can see services, technology, projects, staff and meta-data between the visible content.

to keep loading the inherent content as fast as possible, the site is done as a single page. bootstrap is used to fulfil that request; additionally, some further libs are included to make it even more comfy in here. this page is changed very rarely, like once a year. therefore, consult subpages and subdomains for further information. some gaps in here are not necessarily needed, but it will take some time and ideas to push something as content into the resulting snippets.

optimizations with less framework and node.js are still under construction; simplifying the css and js stack should boost the page even more.

i know, flash is not the solution :/

picture carousel, the 11th

the animated thing above is called piecemaker. it consists of some javascript, an xml file that defines the playlist files, the general settings and the types of transitions, and a flash application that realizes the visual changes. the current version is 2; i just took the freshest one from the developer site, as the version before suffered from my page design in the css file with that layout. all images, videos and flash movies inside the piecemaker should be formatted to the same size, one that fits the flash resolution given with 'params'. in this example, the main resolution of 600x360 gives the button a chance to appear, and the image size setting of 840x320 inside gives the menu some space. this presentation of pictures is another way to bring some life to this task. sliding right or left, denoising: the effects are mostly the same, but we will soon feed that show with more interesting material.

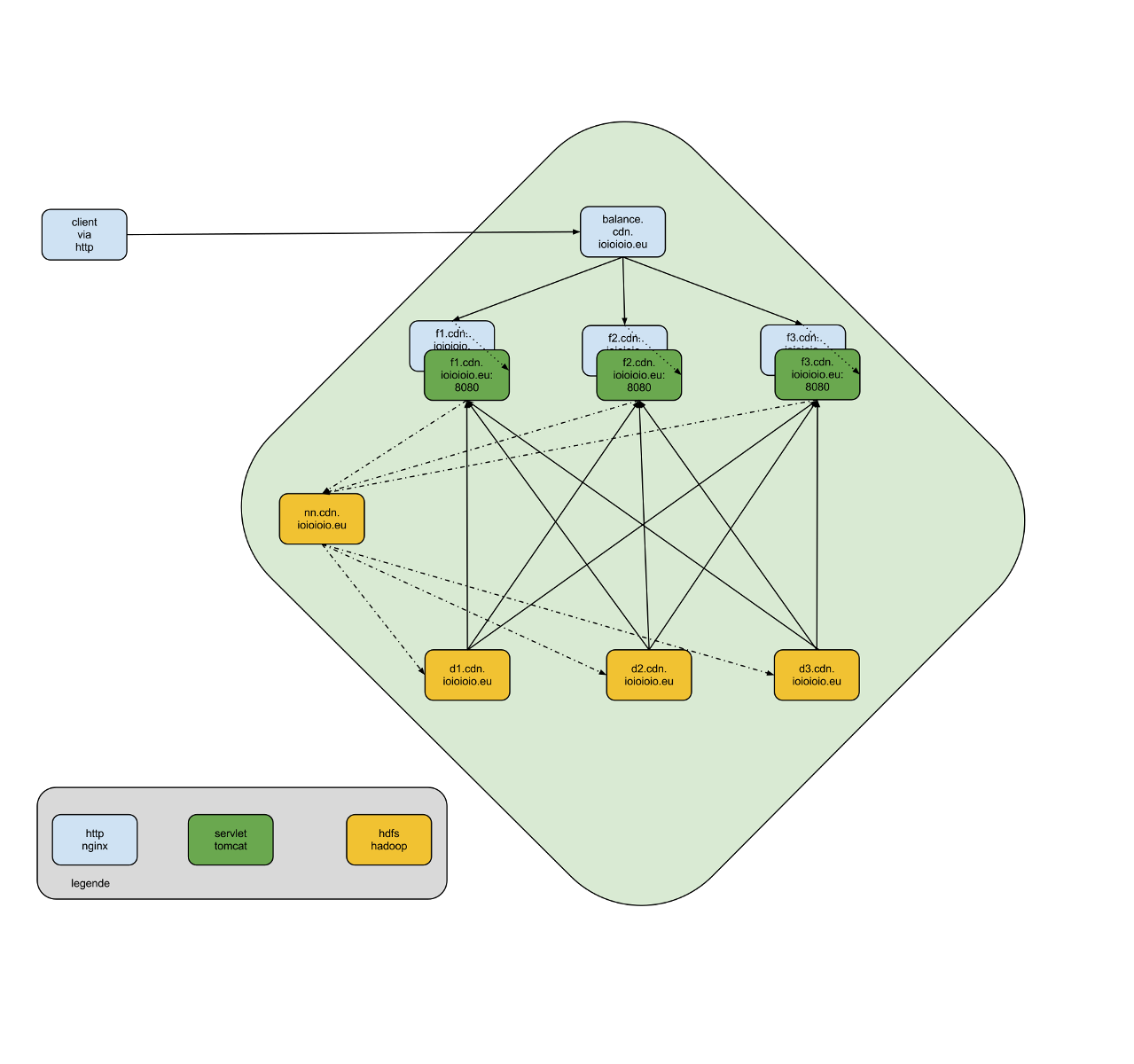

the static content is delivered by a distributed cluster, which could be seen as a cloud. the files are located at different sites, in different data centres. if one requests a file, the file is built on demand. if it is smaller than a particular size, it is cached by the system for a while. delivering the least amount of bytes to the user is a consequence of serving the most with the smallest machine. during the development here, the response for some content, e.g. a site, was reduced from - let's say - 500 kilobytes to some 150 to 300 bytes, depending on the amount of objects in a page. the request is handled on the server side and, with some techniques, the requested material is probably already on the customer's side. if so, the response is sent back to the client side immediately, telling the browser the details.
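the text does not name the "techniques" that shrink a response to a few hundred bytes. one common way to get that effect is an http conditional request with a validator ( etag / if-none-match ), so the following is only a minimal sketch under that assumption. the names `make_etag` and `respond` are made up for illustration and are not taken from this site.

```python
import hashlib
from typing import Optional


def make_etag(body: bytes) -> str:
    """Derive a validator from the content; any stable hash would do."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes, if_none_match: Optional[str] = None):
    """Return (status, headers, payload) for a GET request.

    If the validator sent by the client matches the current content,
    answer 304 with an empty payload instead of resending the body.
    """
    etag = make_etag(body)
    headers = {"ETag": etag}
    if if_none_match == etag:
        return 304, headers, b""
    return 200, headers, body


# first visit: the full page (about 500 kilobytes) travels to the client
page = b"<html>" + b"x" * 500_000 + b"</html>"
status, headers, payload = respond(page)

# second visit: the client sends the validator back and gets headers only
status2, headers2, payload2 = respond(page, if_none_match=headers["ETag"])
```

on the second request only the status line and a few headers go over the wire, which is the order of magnitude the paragraph above talks about.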

if one takes action and dynamically requests something, the request is processed and the result is rendered on the cloud drive, then delivered as static content. that leads to a minimum of server time and maximum performance in the response time back to the client. uploading, and adding content in general, is performed on demand. if the user saves content, the dependent files for the media are generated after processing. any request from that moment on is redirected to the static content.
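the render-on-save idea can be sketched roughly like this. it is a toy under stated assumptions: a temporary directory stands in for the cloud drive, and `StaticStore`, `save` and `get` are hypothetical names, not the real system.

```python
import tempfile
from pathlib import Path


class StaticStore:
    """Renders content once, at save time, and serves the result as a file."""

    def __init__(self, root: Path):
        self.root = root
        self.renders = 0  # counts how often the expensive step ran

    def save(self, name, items):
        """Render the dynamic data and write it out as a static file."""
        html = "<ul>" + "".join(f"<li>{item}</li>" for item in items) + "</ul>"
        (self.root / f"{name}.html").write_text(html, encoding="utf-8")
        self.renders += 1

    def get(self, name):
        """Serve the pre-rendered file; nothing is rendered on read."""
        return (self.root / f"{name}.html").read_text(encoding="utf-8")


store = StaticStore(Path(tempfile.mkdtemp()))
store.save("playlist", ["track 1", "track 2"])
first = store.get("playlist")
second = store.get("playlist")
```

however many reads follow, the rendering work is done once per save, which is where the low server time comes from.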

service

describing the different services was the main aspect in here. we can set up everything, as long as it fits our needs. creating, repairing and extending the hardware and software parts of your life is possible with our potential.

creating content on site, crushing keywords, registering sitemaps,

moving the database, migrating to a newer version, test & approve.

finding the concrete words for a given context.

latest technologies used for client and server

domain specific language, all in java

knowledge in php5, java-script, css3 as well

professional planning tools, based on uml2

diversified testing of core components

continuous integration during test<->build<->deploy

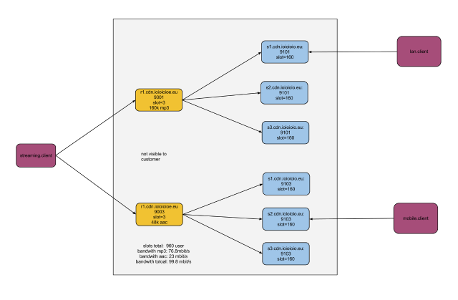

streaming connection is hidden from the customer

customer connects to a phalanx of secondary nodes

connection on highspeed-gateway

deliver content from different housing sites

redundant storage of files

tech

developing app-like mechanics inside a "usual browser" with html5 and the latest tools is another aspect. satisfying any kind of browser and any kind of mobile device is kept in view during development. differing behaviour of those devices raises the effort massively. so the general - minimum needed - amount of data that fits the devices on the different platforms is a core feature of the whole thing. having a rich text editor for authoring material like this would be nice as well, but the stuff i've found refused to work quickly, so a quiet minute is needed for that.

with a stopwatch one could measure and benchmark this site against any other, and what i've seen is that my old equipment with single-core processors, a couple of years old, can perform better than current machines with vastly more power. the reason is that the main functions are built with the stopwatch running, lowering the needed time to an acceptable range. delivering the page with its content should be done within one second. then we can talk about features and the design of the content. either way, the result is the happy user who was served with lightning-fast page creation. with the dom tree in the back of the mind, the complexity of a grown-up app will sometimes hurt to the point of headaches, but the big picture must rely on those core features. slow-loading pages are not acceptable on this site, so feel free to leave a comment somehow.
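building "with the stopwatch" could look roughly like the sketch below: time a step and compare it with the one-second target from the text. `timed` and `build_page` are hypothetical stand-ins, not functions of this site.

```python
import time

BUDGET_SECONDS = 1.0  # the one-second delivery target named in the text


def timed(func, *args, **kwargs):
    """Run func and return its result together with the elapsed seconds."""
    start = time.perf_counter()
    result = func(*args, **kwargs)
    elapsed = time.perf_counter() - start
    return result, elapsed


def build_page(sections):
    """Hypothetical stand-in for the real page creation."""
    return "\n".join(f"<section>{name}</section>" for name in sections)


page, elapsed = timed(build_page, ["intro", "scope", "service", "tech"])
within_budget = elapsed <= BUDGET_SECONDS
```

wrapping every main function like this makes it visible early when a change pushes a step out of the acceptable range.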

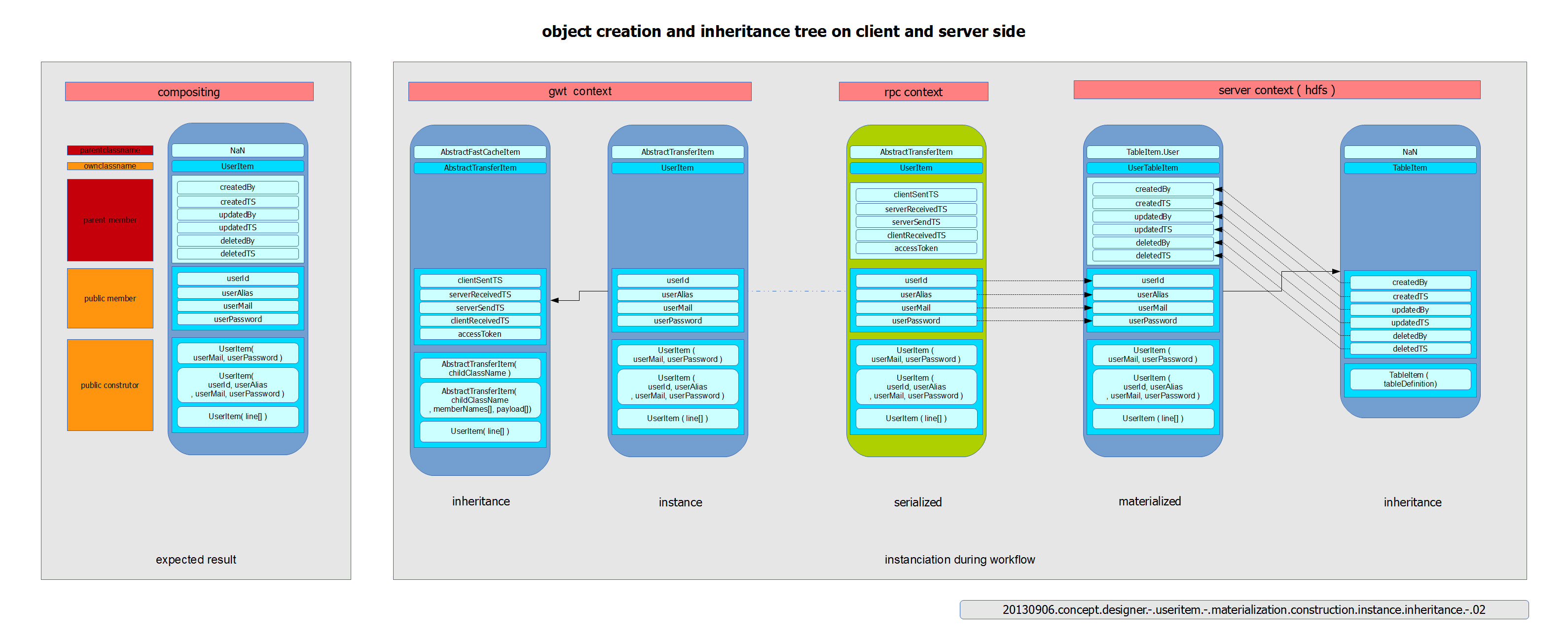

working with a simple data model was necessary during the evolution of communication. different ideas were born in the software world, and furthermore in the java galaxy, to maintain only one model for front-end, back-end and transmission. data nucleus was an idea to shrink the compiled byte-code down to the minimum. but it contains annotations, some meta-data above methods or members. that also happens when the java persistence api ( jpa ) or hibernate is used. such a model is only usable for the non-visible part, the back-end. in the front-end, the same or abstractly similar data is also used in some cases. maintaining two models is worse when the resources to build them are not even provided, as in my case. that's why i looked for a way to work with just one model. with the google web toolkit ( gwt ) everything is written in java. i may trigger the garbage collector ( gc ) a lot with that technique, could be true, but i bet some cents on the difference in productivity during the development and maintenance cycles.
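the one-model idea, independent of gwt, can be shown with a small sketch. the site does this in java; the python below only illustrates the principle, and the `Track` class with its fields is invented for the example.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Track:
    """A single model class shared by front-end, back-end and the wire format."""

    title: str
    artist: str
    seconds: int

    def to_wire(self) -> str:
        """Serialize for transmission; no second 'transfer' model is needed."""
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_wire(cls, payload: str) -> "Track":
        """Rebuild the same model on the receiving side."""
        return cls(**json.loads(payload))


original = Track(title="demo", artist="example artist", seconds=215)
wire = original.to_wire()
received = Track.from_wire(wire)
```

because the class carries no persistence annotations, both sides can use it as it is, which is the property the paragraph above is after.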

also relevant is logging and observing the count and amount of actions and events that happen on user interaction. measuring the invisible parts is done in the very background of the system. the near-real-time presentation of statistics brings exciting moments to the one who sees numbers rising or falling. big data is the keyword for that behaviour, as the collection of data is necessary to optimize the user experience over the time of existence. securing the data, with the handling of the password as the first step, is another topic here. "sha 3 keccak" is already working as a gwt-based module and, in the same way, on the server side. that gives the latest techniques, such as asymmetric encryption, the chance of being implemented later on.
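a minimal sketch of the password step with sha-3, using python's standard `hashlib`. it is not the gwt module mentioned above. the random salt and the helper names are assumptions for illustration, and `hashlib.sha3_256` implements the standardized sha-3, whose padding differs from the original keccak submission.

```python
import hashlib
import hmac
import os
from typing import Optional


def hash_password(password: str, salt: Optional[bytes] = None):
    """Return (salt, hex digest) of salt + password using sha3-256."""
    if salt is None:
        salt = os.urandom(16)  # assumption: a fresh random salt per password
    digest = hashlib.sha3_256(salt + password.encode("utf-8")).hexdigest()
    return salt, digest


def verify_password(password: str, salt: bytes, expected_digest: str) -> bool:
    """Recompute the digest and compare it in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected_digest)


salt, digest = hash_password("correct horse")
ok = verify_password("correct horse", salt, digest)
rejected = verify_password("wrong guess", salt, digest)
```

computing the same digest on client and server, as the text describes, only works if both sides agree on the salt and the exact hash variant.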

this page is also there to test the composition of adobe's shockwave flash with pure javascript, libraries for current ajax frameworks, and the design of placeholders and tokens to create such pages for each customer who wants to register here, sooner or later. having parts loaded directly when the page is requested is one thing; reacting on demand with pre-loading and forecasting methods is another. at the moment, the content is hard-wired inside the html file. in future versions, the loading of files will be triggered as late as possible: when the customer comes close to a page section that was hidden or not visible because it was out of focus, and scrolls or links to that part during the page consumption itself.
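the planned "load as late as possible" behaviour can be modelled in a few lines. this is a simulation of the logic only, not browser code; `LazySection`, the pixel positions and the 200 px margin are assumptions made for the example.

```python
class LazySection:
    """A page section whose content is fetched only when it nears the viewport."""

    def __init__(self, name, top_px, loader):
        self.name = name
        self.top_px = top_px    # vertical position of the section on the page
        self.loader = loader    # callable that fetches the content
        self.content = None     # nothing is loaded up front

    def on_scroll(self, viewport_bottom_px, margin_px=200):
        """Load once, as soon as the section is within margin_px of the viewport."""
        if self.content is None and self.top_px <= viewport_bottom_px + margin_px:
            self.content = self.loader(self.name)
        return self.content


loaded = []  # records which sections were actually fetched


def fake_loader(name):
    loaded.append(name)
    return f"<section id='{name}'>...</section>"


sections = [
    LazySection("intro", 0, fake_loader),
    LazySection("staff", 3000, fake_loader),
]

for section in sections:   # first paint: viewport ends at 800 px
    section.on_scroll(800)
after_first_paint = list(loaded)

for section in sections:   # user scrolled down: viewport ends at 2900 px
    section.on_scroll(2900)
after_scroll = list(loaded)
```

the margin plays the role of the pre-loading mentioned above: the section is fetched shortly before it becomes visible, not when the page is first requested.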

( json, xml, rpc, byte, protobuf )

( http, dns, xml-rpc, xml-xhttp, rtsp, ssh )

( nginx webserver 0.7.x, google web toolkit 2.5.1, apache tomcat 7, apache hadoop 1.1, apache hbase 0.9, apache commons 3, apache comet 0.x, apache upload 1.x )

projects

things we do!

blog

writing within the html on bootstrap is no option for many pages, so there is another wordpress to work with.

some examples of audio renderings out of weekend sessions, with the project title:

chiptune

is a cross-mix of joint cooperations between me and others, and among the others as well.

in the widest sense it is fair to say that this is not just the work of a single person.

some names:

the production makes use of a pc-based daw and a bunch of plugins on the vst and midi interfaces. neither the mixdown nor the story of each snippet there is finished, so be prepared for boring or at least interesting stuff on that subpage.

serving music and movie content for artists with a focus on independence:

dj maggio

dj whaletalker

collecting and connecting people on an infrastructure that one could call cloudddddd somehow,

but in fact, serving files for people on the net

- brute force analysis

- i/o conversion, like midi or dmx

- metering diverse measurements

repairing and pimping it the same way, in hardware.

two sandbox apps as portlets, the project title: geturlxml can create downloadable links from the clipboard and load data into a table. the first app is a helper for when you surf the web and a link pops up, but there is no possibility to right-click and save the file. just copy->paste the url into it and a correct link will appear. the second thing was an attempt to make the java performance test applet results nicer, with a sorted table, a resizing mechanism and offloaded content for delivery on demand.
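what the first helper does can be sketched roughly as follows. this is not the geturlxml code; the function name `download_link` and the use of the html `download` attribute are assumptions for illustration.

```python
import posixpath
from html import escape
from urllib.parse import unquote, urlparse


def download_link(pasted: str) -> str:
    """Turn a pasted url into an html anchor that offers the file for saving."""
    url = pasted.strip()
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError("not an http(s) url: " + repr(pasted))
    # use the last path segment as the suggested file name
    filename = posixpath.basename(unquote(parsed.path)) or "download"
    return '<a href="{}" download="{}">{}</a>'.format(
        escape(url, quote=True), escape(filename, quote=True), escape(filename)
    )


link = download_link("  http://example.com/files/song.mp3 ")
```

escaping the pasted text before it goes into the markup keeps a strange url from breaking the resulting page.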

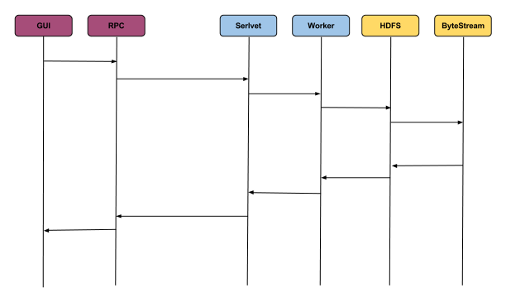

the time for a roundtrip depends on your internet connection. dsl, for example, gives the user a latency of around 20-30 ms in one direction. at least the same time again for the signal's way back down the line makes 40-60 ms as the usual delay to the world. the time on the server is mostly counted in two digits of milliseconds. cached items can be delivered within microseconds; inside the customer context, reading them from local disc ( it's a vhost ) blows the time up to some hundred milliseconds. requesting the file from the cluster drive can take mean times of half a second, depending on the size. we're talking about items with no more than 1 mb of content, in bytes.
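the figures above add up as in this small calculation. it assumes that line, server and storage times simply sum; the concrete server times ( 10 and 99 ms ) and the 300 ms for "some hundred milliseconds" are picked for the example, not measured.

```python
def total_ms(one_way_ms, server_ms, source_ms):
    """Round trip on the line plus server time plus time to fetch the item."""
    return 2 * one_way_ms + server_ms + source_ms


# cached item: microseconds, rounded to 0 ms here
best_case = total_ms(one_way_ms=20, server_ms=10, source_ms=0)

# item read from the local disc of the vhost: "some hundred" ms, assumed 300
local_disc = total_ms(one_way_ms=30, server_ms=99, source_ms=300)

# item requested from the cluster drive: mean of about half a second
cluster = total_ms(one_way_ms=30, server_ms=99, source_ms=500)
```

with these numbers a cached item arrives after about 50 ms, while a cluster read lands at roughly 660 ms, still inside the one-second target for a single item.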

working on the layer of the operating system can save a lot of power

conducting programs through batch or shell scripts, as daemon or service as well.

the software piece is currently in alpha-test, beta-test will start soon!

online: chromanova.fm

currently, no stream is relayed from here, but it should be.

staff

our complement team for success ...

(c) 1999 Jascha Buder

in his third working life, he mostly likes to work with java, after seeing its capability to work things out for multiple tiers in a chain or process of functionality within systems. 20 years of working and learning led him to build a content delivery system as a home project. many cores and many customers are satisfied with these tools and solutions.

got plenty of skillz, from js and php over mysql to css and html. building typo3 sites behind the installation of a virtual machine, e.g. a vhost, is common to him. advancing the system with his own plugins and scripts is the daily business. 15 years of experience is what u can guess.

there is not much known about him, it could be you!

we're looking for people with the capacity for php/js/java/jee on content management / delivery sites. topics like big-data, ajax, remote procedures or objects are familiar to you. then join our team soon and